How to Build Trust Around Data Privacy in AI-Powered Workflows

"We can't risk our data with AI."

If you've worked in enterprise sales or consulted with regulated industries, you've heard some version of this sentence dozens of times. Every organization wants the efficiency gains that AI-powered workflows deliver, right up until the words "data privacy" and "compliance" stop the conversation cold.

The concern is legitimate. If you're in insurance, finance, healthcare, or any other regulated industry, the stakes are real. One misstep with sensitive data isn't just a technical problem. It's a legal, reputational, and operational crisis.

The mistake most AI vendors make is trying to hand-wave past these concerns. "Trust the AI" isn't a compliance strategy. Enterprises need proof, not promises.

What Enterprise-Grade AI Security Actually Requires

There are baseline requirements that any enterprise should demand before deploying AI in production workflows.

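SOC 2 Type II compliance is table stakes. It means an independent auditor has tested the platform's controls (security, and often availability and confidentiality) and confirmed that they operated effectively over a review period, typically several months. If your AI vendor can't produce a current SOC 2 Type II report, that's a red flag.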

Private cloud or on-premises deployment gives you control over where your data lives and who has access to it. For regulated industries, this isn't optional. Data residency requirements exist for good reasons, and your AI platform needs to respect them.
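One way to make "respect data residency" concrete is a guard that runs before any record leaves your systems. The sketch below is a minimal illustration, not any vendor's feature: the data classifications, region names, endpoint names, and policy table are all hypothetical, and a real policy would come from your compliance team.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    """Hypothetical description of where a model runs."""
    name: str
    region: str     # where data is processed, e.g. "us-east" (hypothetical label)
    private: bool   # True if inside your own private cloud or on-premises


# Hypothetical policy: allowed regions per data classification (None = any region),
# and whether the data must stay on infrastructure you control.
RESIDENCY_POLICY = {
    "public": {"regions": None, "private_only": False},
    "confidential": {"regions": {"us-east", "us-west"}, "private_only": False},
    "regulated": {"regions": {"us-east"}, "private_only": True},
}


class ResidencyViolation(Exception):
    pass


def check_residency(classification: str, endpoint: Endpoint) -> None:
    """Raise before any data is sent if the endpoint breaks the residency policy."""
    policy = RESIDENCY_POLICY[classification]
    if policy["private_only"] and not endpoint.private:
        raise ResidencyViolation(
            f"{classification} data requires private deployment; {endpoint.name} is shared"
        )
    if policy["regions"] is not None and endpoint.region not in policy["regions"]:
        raise ResidencyViolation(
            f"{classification} data may not be processed in {endpoint.region}"
        )
```

The point of failing loudly before the call, rather than filtering afterward, is that data which never leaves your infrastructure never needs to be clawed back.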

Full audit trails mean every action the AI takes is logged, traceable, and reviewable. When compliance auditors ask what happened to a specific document or data point, you need a clear answer. No black boxes.
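Here is what "logged, traceable, and reviewable" can look like in practice: an append-only log in which every entry carries a hash of the one before it, so editing any entry breaks the chain. This is a minimal sketch assuming an in-memory list and SHA-256 hash chaining; `AuditLog`, `record`, `verify`, and `history` are hypothetical names, not a particular platform's API.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """Hypothetical append-only audit log; each entry hashes the previous one."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    def record(self, actor, action, target, detail=None):
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "actor": actor,      # e.g. "ai-extractor" or a reviewer's user ID
            "action": action,    # e.g. "extract_field", "approve"
            "target": target,    # e.g. a document or data-point identifier
            "detail": detail or {},
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._hash(entry)
        self.entries.append(entry)
        return entry

    @staticmethod
    def _hash(entry):
        # Hash every field except the hash itself, with stable key ordering.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def verify(self):
        """Return True if every entry is unmodified and still linked to its predecessor."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._hash(entry):
                return False
            prev_hash = entry["hash"]
        return True

    def history(self, target):
        """Answer an auditor's question: what happened to this document?"""
        return [e for e in self.entries if e["target"] == target]


log = AuditLog()
log.record("ai-extractor", "extract_field", "claim-1042.pdf", {"field": "policy_number"})
log.record("j.smith", "approve", "claim-1042.pdf")
print(log.verify())  # True
log.entries[0]["actor"] = "someone-else"  # simulate tampering
print(log.verify())  # False
```

A production system would also persist entries to write-once storage and keep the latest hash somewhere outside the log, since chaining alone can't detect entries deleted from the end.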

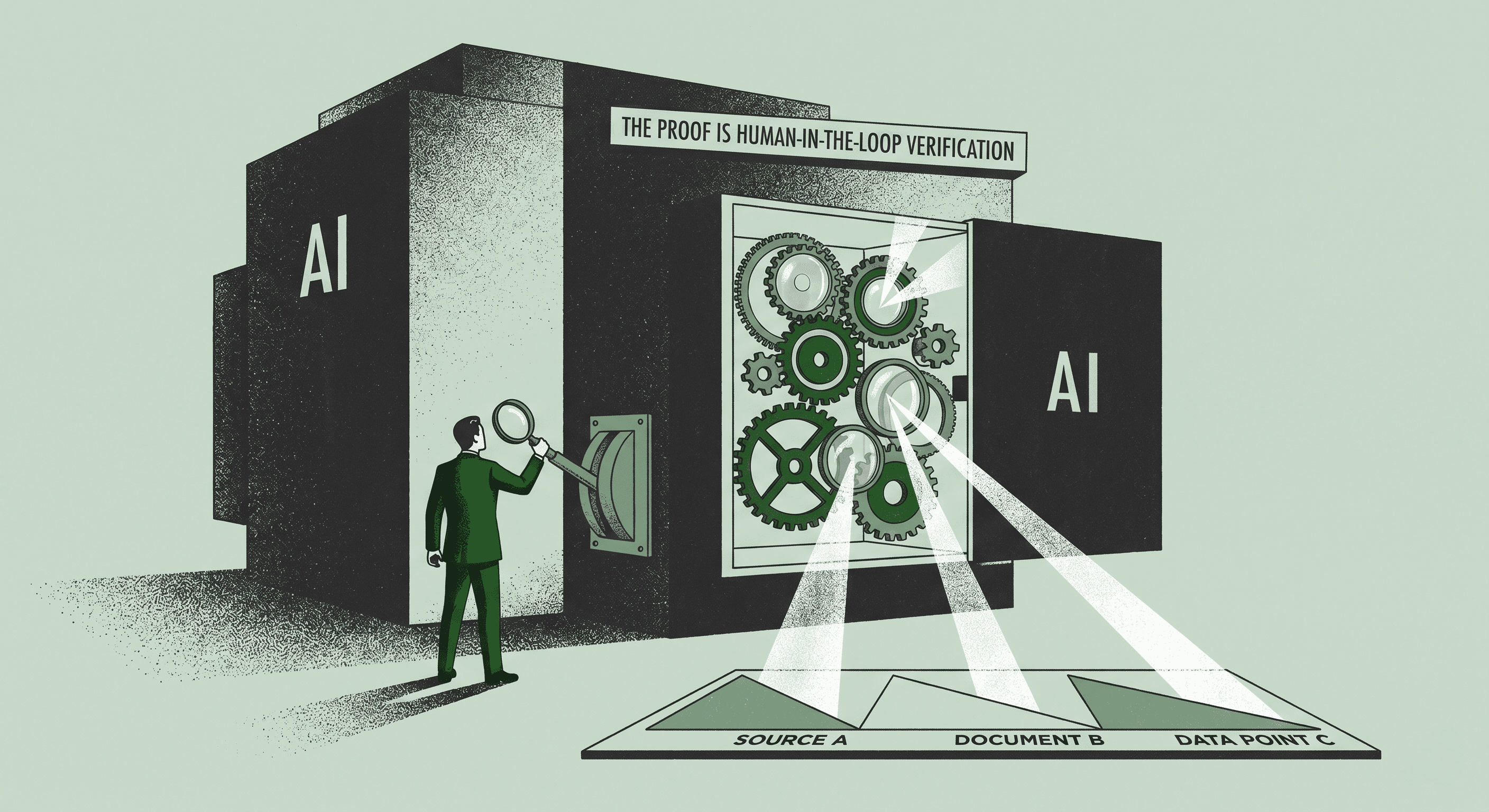

Human-in-the-Loop Is Not Optional

The most important trust-building mechanism in AI workflows is human-in-the-loop verification. This means inserting human review points at critical stages of automated processes.
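A review point can be as simple as a gate that refuses to mark an AI output final until a named person approves it. The sketch below assumes a pipeline split into named stages, with only the stages you mark as critical held for review; `ReviewGate`, `ProposedDecision`, and the stage names are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, Iterable, List, Optional


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class ProposedDecision:
    """Hypothetical type: an AI output that is not final until it clears the gate."""
    decision_id: str
    stage: str
    value: str
    status: Status = Status.PENDING
    reviewer: Optional[str] = None
    note: str = ""


class ReviewGate:
    """Hypothetical gate: outputs from critical stages wait for a human decision."""

    def __init__(self, critical_stages: Iterable[str]):
        self.critical_stages = set(critical_stages)
        self.queue: Dict[str, ProposedDecision] = {}

    def submit(self, decision: ProposedDecision) -> ProposedDecision:
        if decision.stage not in self.critical_stages:
            # Low-risk stage: pass through, but record that no human signed off.
            decision.status = Status.APPROVED
            decision.reviewer = "auto"
        else:
            # Critical stage: hold until a reviewer approves or rejects it.
            self.queue[decision.decision_id] = decision
        return decision

    def review(self, decision_id: str, reviewer: str, approve: bool, note: str = "") -> ProposedDecision:
        decision = self.queue.pop(decision_id)  # KeyError if nothing is pending under this ID
        decision.status = Status.APPROVED if approve else Status.REJECTED
        decision.reviewer = reviewer
        decision.note = note
        return decision

    def pending(self) -> List[ProposedDecision]:
        return list(self.queue.values())


gate = ReviewGate(critical_stages={"coverage_decision"})
gate.submit(ProposedDecision("d1", "ocr", "text extracted"))
gate.submit(ProposedDecision("d2", "coverage_decision", "approve claim"))
print([d.decision_id for d in gate.pending()])  # ['d2']
```

To have a person verify every automated decision, pass every stage name as critical; the gate's cost is then purely reviewer time, which is why deciding which stages count as critical matters.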

AI citations for every data point and decision tackle the black box problem head-on. When the system tells you something, it shows you exactly where that information came from. You can verify it. You can challenge it. You can trust it because you can see the receipts.
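Citations only help if someone, or something, checks them. A first-pass check can confirm that each quoted passage actually appears in the cited source at the stated location. This sketch assumes citations arrive as a document ID plus character offsets; the `Citation` and `CitedAnswer` structures and `verify_citations` are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Citation:
    """Hypothetical citation format: where in which document the support lives."""
    doc_id: str
    start: int   # character offset where the supporting text begins
    end: int     # character offset where it ends (exclusive)
    quote: str   # the exact text the model claims supports its answer


@dataclass
class CitedAnswer:
    name: str    # the data point, e.g. "deductible"
    value: str
    citations: List[Citation]


def verify_citations(answer: CitedAnswer, sources: Dict[str, str]) -> List[str]:
    """Return a list of problems; an empty list means every citation checks out."""
    problems = []
    if not answer.citations:
        problems.append(f"{answer.name}: no citation provided")
    for c in answer.citations:
        text = sources.get(c.doc_id)
        if text is None:
            problems.append(f"{answer.name}: unknown source {c.doc_id!r}")
        elif text[c.start:c.end] != c.quote:
            problems.append(f"{answer.name}: quote not found at {c.doc_id}[{c.start}:{c.end}]")
    return problems
```

A string match proves the receipt exists, not that the model interpreted it correctly. That judgment still belongs to the human reviewer.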

This isn't about slowing things down. It's about making automation trustworthy enough to use for work that matters. The alternative, fully autonomous AI making decisions on sensitive data with no oversight, is what gives CISOs nightmares.

A Real-World Example

I recently worked with a major insurer that was firmly against using AI for document workflows. Their biggest fear was losing control over proprietary data and failing an audit.

We didn't try to convince them with features or promises. Instead, we showed them three things: native citations that traced every output back to its source, configurable deployment options that kept their data within their own infrastructure, and real-time human review that let their team verify every automated decision before it became final.

The conversation shifted from "no way" to "how soon can we start."

Building Trust the Right Way

Trust in AI isn't built on marketing materials or demo environments. It's built on three things.

Proof that the security controls are real and independently verified. Transparency into every decision the AI makes and every data point it touches. Control that keeps your team in the driver's seat at every critical juncture.

If you're evaluating AI platforms for regulated workflows, don't settle for "trust us." Demand to see the audit trails, the deployment options, and the human oversight mechanisms. The right platform will welcome that scrutiny.

The companies that figure out how to deploy AI responsibly won't just save time. They'll build a competitive advantage rooted in speed and trust simultaneously.