
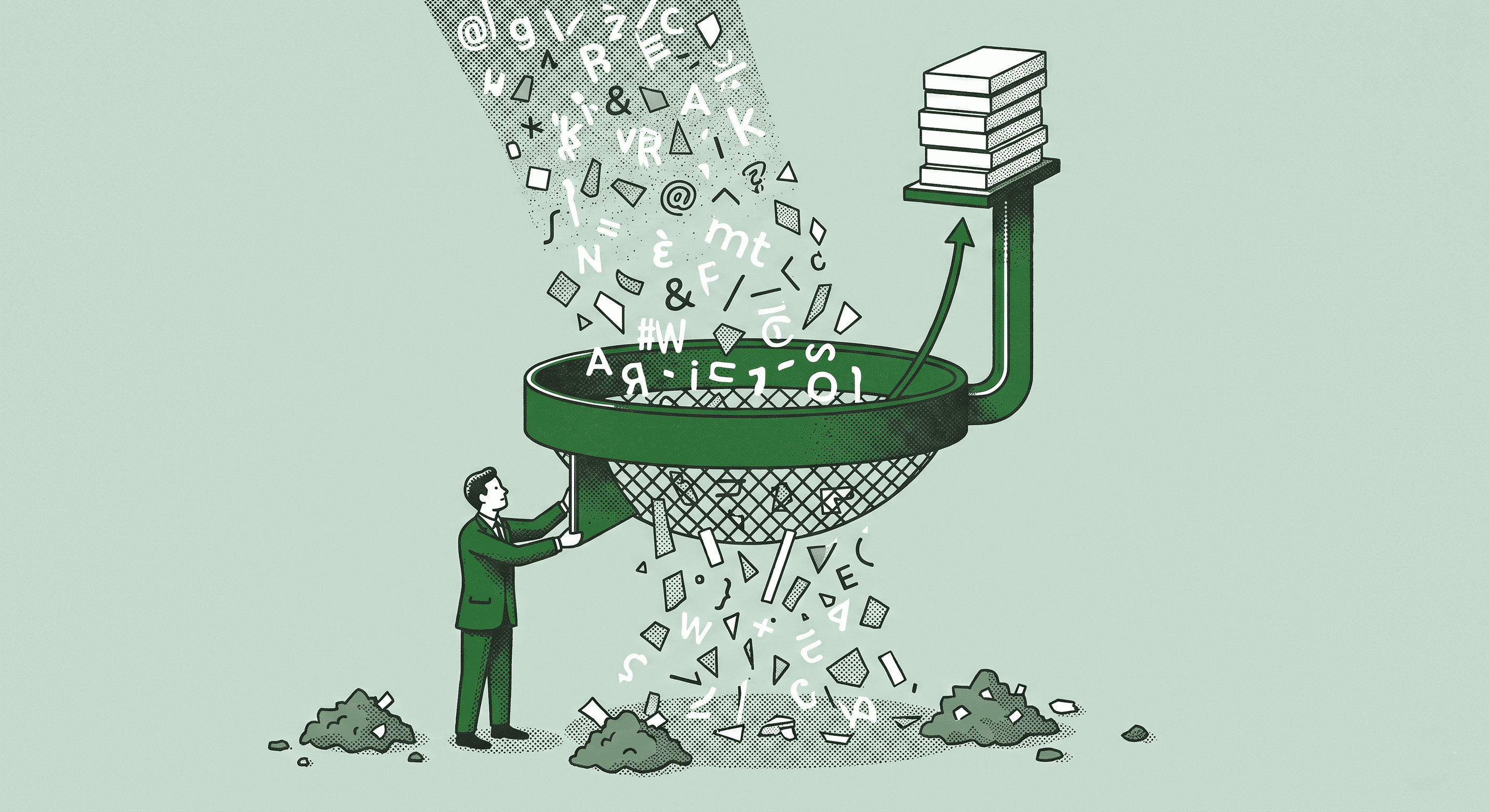

The Future of AI Isn't More Data. It's Better Data.

Most teams are drowning in data, not starving for it. Feeding AI systems more of the same won't magically make them smarter.

The real edge isn't volume. It's how you pick, structure, and use what you already have.

The next wave of AI isn't about quantity. It's about quality, context, and control.

The Problem With More Data

The conventional wisdom in AI has been straightforward for years. More data equals better models. More training examples equals better outputs. Scale your dataset and performance follows.

That logic breaks down in practice for most business applications. You don't need a billion rows of generic data. You need clean, structured, contextually relevant data that reflects your actual business operations.

Most teams I work with have plenty of data. It's scattered across 15 tools, formatted inconsistently, and mixed with noise that actively degrades AI performance. Adding more data to that pile doesn't help. It makes things worse.

Quality Over Quantity in Practice

The shift toward data quality means rethinking how you prepare information for AI consumption.

It starts with extraction. Modern document-processing platforms can pull structured data from most document types, including PDFs, emails, images, handwritten notes, and database exports. The format of your source material is far less of a constraint than it used to be.

Then comes structuring. Raw data needs to be organized in a way that AI can reliably process. That means consistent schemas, clean labels, and clear relationships between data points. This is the unsexy work that separates reliable AI from unreliable AI.
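To make "consistent schemas" concrete, here is a minimal Python sketch of mapping records from different tools onto one shape. Everything in it is an assumption for illustration: the `Invoice` schema, the field aliases, and the date formats are hypothetical, not taken from any particular platform.

```python
from dataclasses import dataclass
from datetime import date, datetime
from decimal import Decimal


@dataclass(frozen=True)
class Invoice:
    """Hypothetical target schema; the field names are illustrative."""
    customer_id: str
    amount_cents: int
    issued_on: date


# Assumed: the different spellings each source tool uses for the same field.
FIELD_ALIASES = {
    "customer_id": ("customer_id", "CustomerID", "client"),
    "amount": ("amount", "total", "Amount (USD)"),
    "issued_on": ("issued_on", "date", "Invoice Date"),
}

# Assumed: the date formats the source tools emit.
DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y")


def pick(record: dict, field: str):
    """Return the first non-empty value stored under any alias of `field`."""
    for key in FIELD_ALIASES[field]:
        if key in record and str(record[key]).strip():
            return record[key]
    raise KeyError(f"missing field: {field}")


def parse_date(raw: str) -> date:
    """Try each known format in order; fail loudly on anything else."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date format: {raw!r}")


def parse_amount_cents(raw) -> int:
    """Accept values like '$1,250.50' or 1250.5 and return whole cents."""
    text = str(raw).replace("$", "").replace(",", "").strip()
    return int((Decimal(text) * 100).to_integral_value())


def normalize(record: dict) -> Invoice:
    """Map one raw record onto the shared schema, or raise if it can't be."""
    return Invoice(
        customer_id=str(pick(record, "customer_id")).strip().upper(),
        amount_cents=parse_amount_cents(pick(record, "amount")),
        issued_on=parse_date(str(pick(record, "issued_on"))),
    )
```

The design choice worth noticing is that `normalize` raises on anything it doesn't recognize instead of guessing. A record that fails loudly is easier to fix than one that slips into the pile as noise.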

Finally, there's the trust layer. AI citations, human-in-the-loop validation, and multimodal document understanding help make your outputs auditable, trustworthy, and ready for real-world decisions. When you can trace every output back to its source, you can trust the system enough to act on its recommendations.
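One way to picture the trust layer is as a rule: every extracted value carries a pointer to its source, and anything uncited or low-confidence goes to a person. The sketch below assumes a hypothetical `ExtractedField` record and an arbitrary 0.9 confidence cutoff. Both are illustrative choices, not a standard.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Citation:
    """Where a value came from (hypothetical shape)."""
    document: str
    page: int
    snippet: str


@dataclass(frozen=True)
class ExtractedField:
    """One AI-extracted value plus the evidence behind it (hypothetical shape)."""
    name: str
    value: str
    confidence: float
    citation: Optional[Citation]


# Assumed cutoff; tune it against your own review data.
REVIEW_THRESHOLD = 0.9


def needs_human_review(field: ExtractedField) -> bool:
    """Flag anything without a source or below the confidence cutoff."""
    return field.citation is None or field.confidence < REVIEW_THRESHOLD


def route(fields: List[ExtractedField]) -> Tuple[List[ExtractedField], List[ExtractedField]]:
    """Split fields into (auto-accepted, queued for human review)."""
    accepted: List[ExtractedField] = []
    review: List[ExtractedField] = []
    for field in fields:
        (review if needs_human_review(field) else accepted).append(field)
    return accepted, review
```

Nothing here is clever, and that's the point. The rule is simple enough that anyone on the team can read it and see why a given value was accepted or sent for review.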

What This Means for Your Business

For most growing startups, this pays off in three practical ways.

Faster deployment. When your data is clean and structured, new AI workflows can be built and tested in days instead of months. You're not spending weeks cleaning data before you can even start.

Airtight compliance. Structured data with full audit trails makes regulatory requirements manageable. You can demonstrate exactly what data went into every decision and output.
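As a rough illustration of what an audit trail can look like in code, the sketch below records which inputs fed each decision and chains the entries together with hashes so later edits are detectable. Hash chaining is my own choice of technique here, and the entry fields are hypothetical. Treat it as one possible shape, not a compliance standard.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import List


def fingerprint(payload: dict) -> str:
    """SHA-256 of a canonical JSON form, so key order doesn't matter."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def audit_entry(decision: str, inputs: List[dict], prev_hash: str = "") -> dict:
    """Record a decision, the fingerprints of its inputs, and a link to the prior entry."""
    entry = {
        "decision": decision,
        "input_fingerprints": [fingerprint(item) for item in inputs],
        "recorded_at": datetime.now(timezone.utc).isoformat(),
        "prev_hash": prev_hash,
    }
    # The hash covers every field above, so changing any of them breaks it.
    entry["entry_hash"] = fingerprint(entry)
    return entry


def verify(log: List[dict]) -> bool:
    """Check that no entry was altered and the chain is unbroken."""
    prev = ""
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        if entry["prev_hash"] != prev or fingerprint(body) != entry["entry_hash"]:
            return False
        prev = entry["entry_hash"]
    return True
```

To use it, you'd pass each new entry the `entry_hash` of the one before it and run `verify` over the whole log when someone asks what went into a decision.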

Seamless integration. Clean data connects to existing systems without the usual technical headaches. No more six-month onboarding sagas to get a new tool working with your stack.

The Strategic Advantage

The future isn't about building bigger haystacks. It's about finding the needles faster and knowing exactly why you picked them.

The companies that invest in data quality now are building a compounding advantage. Every AI workflow they deploy performs better because the foundation is solid. Every new use case is faster to implement because the data infrastructure already exists.

Meanwhile, teams that keep throwing more unstructured data at AI models will keep getting inconsistent results and wondering why the technology doesn't live up to the hype.

If your AI implementations feel unreliable, the problem probably isn't the model. It's the data going into it. Fix the inputs and the outputs take care of themselves.