
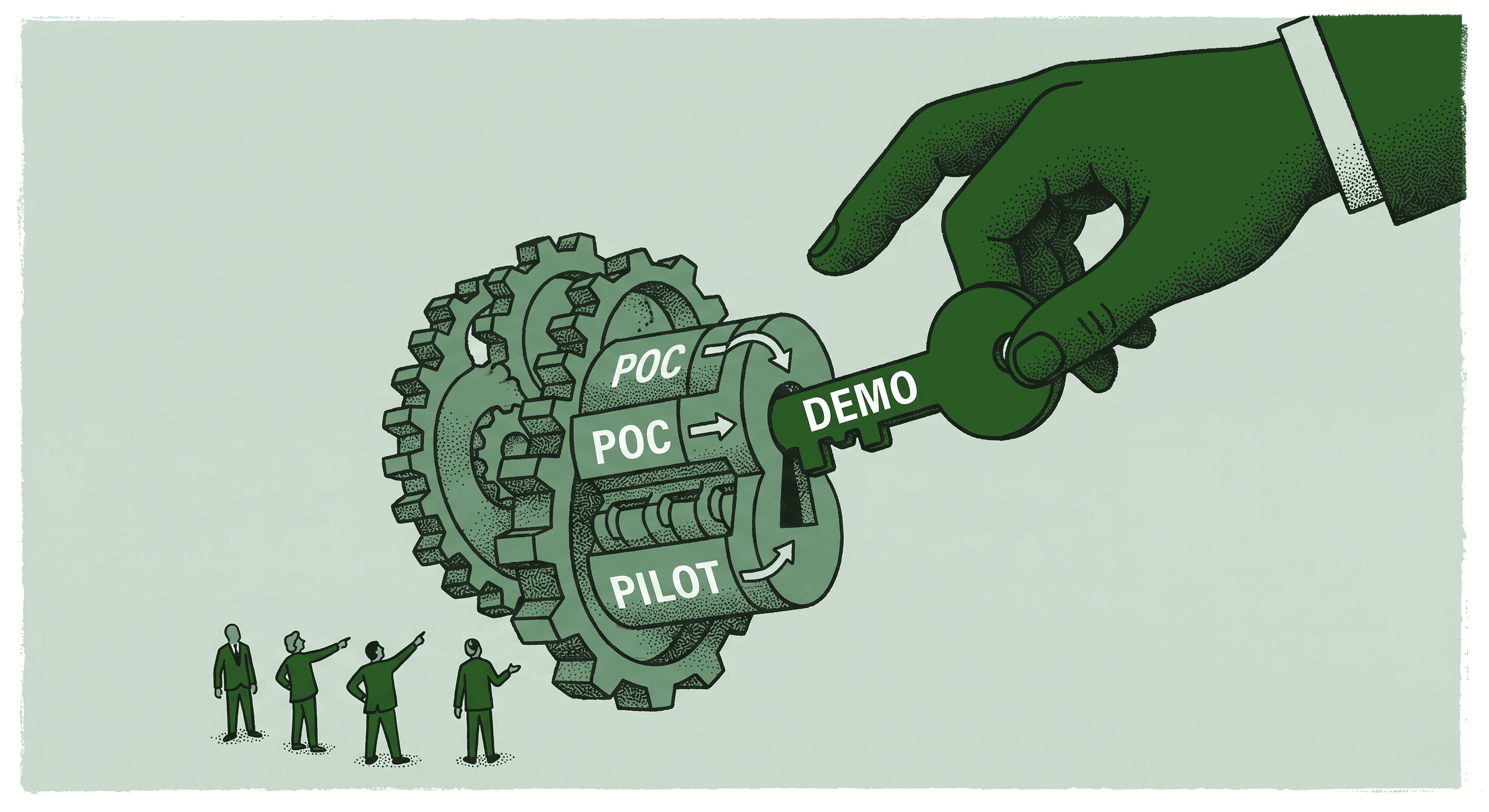

The Real Difference Between a Demo, a POC, and a Pilot in AI Projects

Many AI projects stall because teams mix up three distinct stages of evaluation. If you've ever sat through a flashy demo and then wondered why real-world results lagged months behind, you're in good company.

The problem isn't the technology. It's the expectations.

Expecting a demo to prove production readiness is like expecting a test drive to tell you about long-term maintenance costs. Each stage of AI evaluation serves a different purpose, and treating them interchangeably creates confusion, wasted time, and frustrated stakeholders.

Three Stages, Three Purposes

A demo is "show me what's possible." It's a tailored walkthrough designed to demonstrate capability. The data is curated. The conditions are controlled. The goal is to help you understand what the platform can do and whether it's worth investigating further. A demo is not evidence that the tool works on your data, in your environment, with your edge cases.

A proof of concept (POC) is "prove it works on my data." This is a small, controlled test using your actual documents, your actual workflows, and your actual success criteria. The scope is intentionally limited. You're validating that the technology can handle your specific use case before committing to a broader rollout. A good POC has clear metrics defined upfront and a timeline measured in days, not months.

A pilot is "let's see it run in the wild." Real users. Real documents. Real stakes. A pilot tests the solution under production conditions with all the messiness that implies. Edge cases surface. Integration challenges appear. User adoption patterns emerge. This is where you learn whether the tool actually works as part of your daily operations.

Why This Clarity Matters

When teams skip stages or blur the boundaries between them, predictable problems follow.

A team that expects demo-level polish from a POC gets frustrated when edge cases appear. A team that treats a successful POC as if it were a pilot skips the change management and adoption planning that real users require. A team that jumps straight from demo to pilot misses the controlled validation step that catches deal-breaking issues early.

The fastest teams I work with treat each stage as a system, not a checkbox. They define success criteria before each stage begins. They know exactly what they're testing and what decisions the results will inform.

This is the difference between a two-week win and a six-month headache.

Moving Through the Stages Quickly

The traditional timeline for enterprise AI evaluation is painfully slow. Months of demos. Quarters of POCs. A pilot that drags on indefinitely without a clear go or no-go decision.

That timeline is no longer necessary. With modern no-code AI platforms, human-in-the-loop workflows, and rapid configuration, you can often move from demo to POC to pilot in days instead of months.

The key is setting the right expectations at each stage. Know what you're testing. Know what success looks like. Make the decision and move forward.

The Takeaway

Stop expecting a demo to do a pilot's job. Set the right expectations at each stage, and you'll accelerate decision-making, reduce risk, and deliver value before your next quarterly review.

The organizations that evaluate AI well don't move slowly because they're being careful. They move quickly because they're being clear about what each stage is supposed to prove.