Why Step-by-Step Agents Outperform One-Shot LLMs Every Time

The latest AI models feel like magic. With a single prompt, you can solve in five minutes problems that used to take experts hours.

It feels incredible when it works. The problem is what happens when it doesn't.

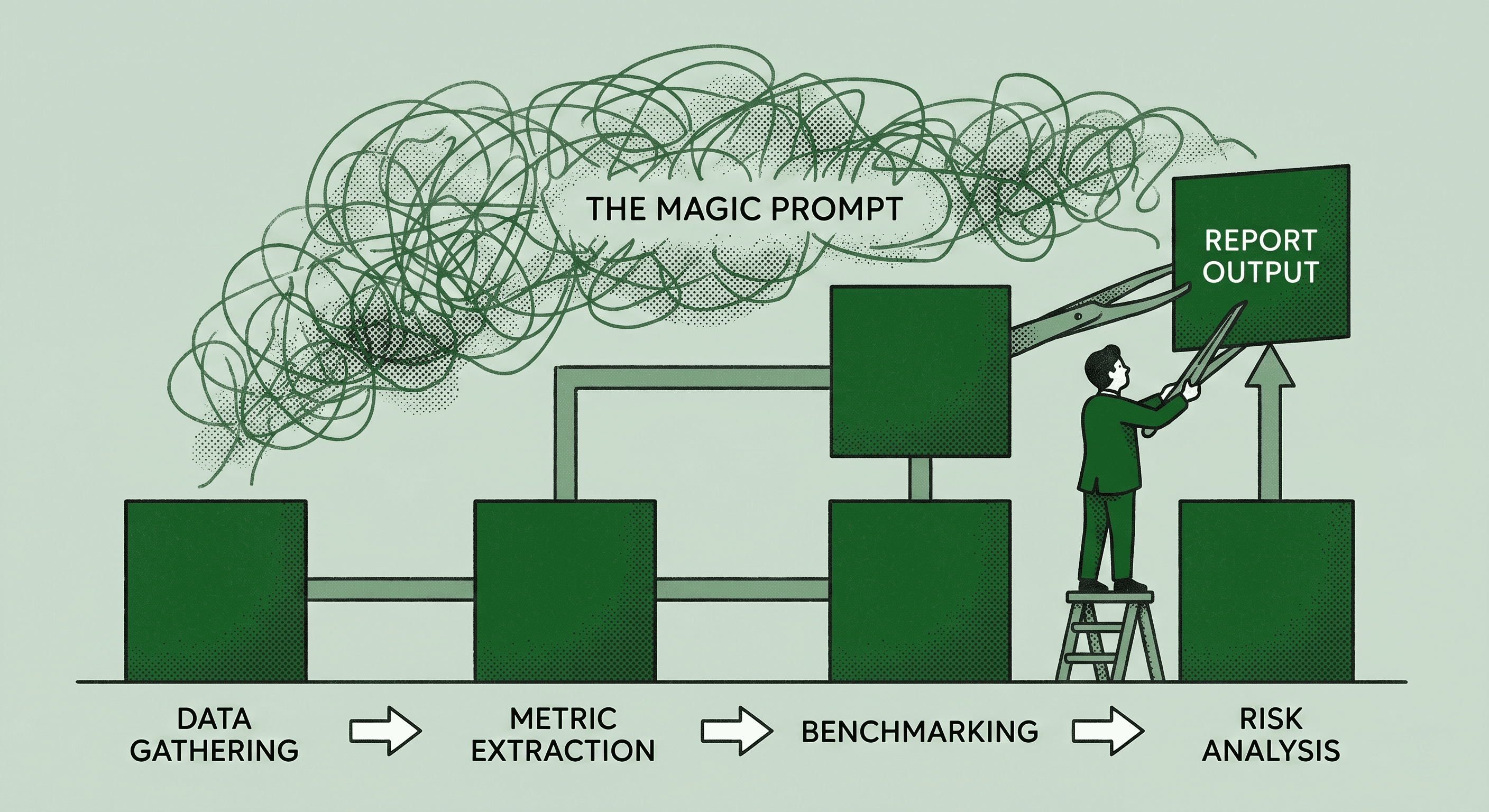

Unpredictable results. Zero traceability. Zero auditability. A black box you can't debug. That's the reality of relying on single-prompt magic for anything that matters in production.

Complex workflows like systems audits, compliance checks, or due diligence demand more than a clever prompt. They need structure, logic, and the ability to adapt when things get messy.

Real operations demand step-by-step agents, not magic one-shots.

The Shift From Prompt Engineering to System Engineering

The AI industry has spent two years optimizing prompts. That was the right focus when the technology was new and we were figuring out what it could do. But we've moved past that phase.

The companies getting real value from AI in production aren't writing better prompts. They're engineering better systems.

That means breaking complex goals into discrete, manageable steps. Each step has a clear input, a defined process, and a measurable output. When one step fails, you can identify exactly where things went wrong without tearing apart the entire workflow.
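As a rough illustration of that idea, here is a minimal Python sketch of a chain of named steps. The names `Step`, `StepFailed`, and `run_chain` are hypothetical, and the three toy steps stand in for real work (some of which would call a model). The point is only that a failure surfaces with the name of the step that caused it.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class Step:
    name: str
    run: Callable[[Any], Any]  # receives the previous step's output


class StepFailed(Exception):
    """Carries the name of the step that raised, so failures are easy to locate."""

    def __init__(self, step_name: str, cause: Exception):
        super().__init__(f"step '{step_name}' failed: {cause}")
        self.step_name = step_name


def run_chain(steps: list[Step], initial_input: Any) -> Any:
    data = initial_input
    for step in steps:
        try:
            data = step.run(data)
        except Exception as exc:
            # Wrap the error so the caller knows exactly which step broke.
            raise StepFailed(step.name, exc) from exc
    return data


# Hypothetical toy steps: clear input, defined process, measurable output.
steps = [
    Step("normalize", lambda text: text.strip().lower()),
    Step("tokenize", lambda text: text.split()),
    Step("count", lambda tokens: len(tokens)),
]

result = run_chain(steps, "  Quarterly Revenue Rose  ")
print(result)
```

If any step raises, `StepFailed.step_name` points at it, and the other steps do not need to be touched.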

It means laying the workflow out visually, chaining text, file, and decision blocks so the logic is explicit. When you can see the entire flow laid out, you can reason about it, debug it, and improve it systematically.

It means inserting human-in-the-loop checkpoints where accuracy is non-negotiable. Not every step needs human oversight. But the high-stakes decisions absolutely do.
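One way such a checkpoint could look in code is sketched below. This is an assumption-laden example, not a prescribed design: `checkpoint`, `Rejected`, `console_approver`, and the `high_stakes` flag are all hypothetical names. Low-stakes output passes straight through, while high-stakes output needs a reviewer's sign-off.

```python
from typing import Callable

# An approver receives the step name and its proposed output; True means accept.
Approver = Callable[[str, str], bool]


class Rejected(Exception):
    """Raised when a reviewer declines a high-stakes output."""


def checkpoint(step_name: str, output: str, high_stakes: bool, approve: Approver) -> str:
    """Pass low-stakes output through; require sign-off on high-stakes output."""
    if high_stakes and not approve(step_name, output):
        raise Rejected(f"reviewer rejected output of '{step_name}'")
    return output


def console_approver(step_name: str, output: str) -> bool:
    # Interactive reviewer for local use; a real system might use a ticket or UI.
    answer = input(f"[{step_name}] approve this output?\n{output}\n(y/n) ")
    return answer.strip().lower() == "y"


# Stand-in approver so the example runs unattended.
def auto_approve(step_name: str, output: str) -> bool:
    return True


summary = checkpoint("summarize", "Draft summary", high_stakes=False, approve=auto_approve)
finding = checkpoint("flag_violation", "Possible violation in section 3",
                     high_stakes=True, approve=auto_approve)
```

Swapping `auto_approve` for `console_approver` (or any other reviewer function) changes who signs off without changing the steps themselves.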

Why Chaining Steps Wins

Chaining together discrete workflow steps is consistently more reliable than asking a model to handle everything at once. Here's why.

You can audit every decision. When each step produces a visible output, you have a complete trail of how the system arrived at its conclusion. This matters for compliance, quality control, and trust.
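A minimal sketch of such a trail, assuming each step is a plain Python function: the hypothetical `run_with_audit` helper records every step's input and output with a timestamp. The invoice text and the 1000 limit are made-up illustration values.

```python
import json
from datetime import datetime, timezone


def run_with_audit(steps, initial_input):
    """Run (name, function) pairs in order, logging every input and output."""
    trail = []
    data = initial_input
    for name, fn in steps:
        output = fn(data)
        trail.append({
            "step": name,
            "input": data,
            "output": output,
            "finished_at": datetime.now(timezone.utc).isoformat(),
        })
        data = output
    return data, trail


# Hypothetical steps for a toy spending check.
audit_steps = [
    ("extract_amounts", lambda text: [int(tok) for tok in text.split() if tok.isdigit()]),
    ("total", lambda amounts: sum(amounts)),
    ("check_limit", lambda total: {"total": total, "over_limit": total > 1000}),
]

result, trail = run_with_audit(audit_steps, "invoices of 400 and 750 received")
print(json.dumps(trail, indent=2))
```

Because every entry is plain data, the trail can be stored alongside the final result and reviewed later.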

You can fix specific failures without breaking the whole chain. If step four produces bad output, you fix step four. You don't have to rewrite the entire prompt and hope you didn't break steps one through three in the process.
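To make that concrete, the sketch below swaps out one faulty step while leaving its neighbor untouched. All function names are hypothetical, and the 20% uplift in `apply_uplift` is an arbitrary illustration value.

```python
def parse_amount_v1(raw: str) -> float:
    # Original step: breaks on thousands separators such as "1,250.50".
    return float(raw.replace("$", ""))


def parse_amount_v2(raw: str) -> float:
    # Fixed step: same input and output contract, handles separators.
    return float(raw.replace("$", "").replace(",", ""))


def apply_uplift(amount: float) -> float:
    # Downstream step, unchanged by the fix (20% is an arbitrary example rate).
    return round(amount * 1.2, 2)


def build_pipeline(parse):
    """Compose the parsing step with the unchanged downstream step."""
    def pipeline(raw: str) -> float:
        return apply_uplift(parse(raw))
    return pipeline


broken = build_pipeline(parse_amount_v1)
fixed = build_pipeline(parse_amount_v2)

print(fixed("$1,250.50"))
```

Only the parsing step changed. Inputs that already worked, such as `"$100"`, give the same result through both pipelines.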

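You can scale from one run to a thousand without sweating. Narrowly scoped steps with fixed input and output formats behave far more consistently across runs, and any deterministic logic between them behaves identically at any volume. A single sprawling prompt, by contrast, tends to produce more variable results as its input grows more complex.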

What This Looks Like in Practice

Take a company analysis workflow as an example. Instead of asking an LLM to "analyze this company and give me a report," you break it into structured steps.

Step one gathers the raw data from defined sources. Step two extracts key financial metrics into a standardized format. Step three compares those metrics against industry benchmarks. Step four identifies risks and opportunities based on the comparison. Step five generates the final report in a consistent template.
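The five steps above can be sketched as five small functions. Everything here is a hypothetical stand-in: the company name, the figures, and the 10% benchmark margin are invented placeholders, and in a real workflow step one would read from actual sources and some steps would likely call a model.

```python
from dataclasses import dataclass


@dataclass
class Metrics:
    revenue: float
    net_income: float

    @property
    def net_margin(self) -> float:
        return self.net_income / self.revenue


def gather_data(company: str) -> dict:
    # Step 1: stand-in for pulling raw data from defined sources.
    return {"company": company, "revenue": "2,000,000", "net_income": "150,000"}


def extract_metrics(raw: dict) -> Metrics:
    # Step 2: convert raw strings into a standardized numeric format.
    def to_number(text: str) -> float:
        return float(text.replace(",", ""))
    return Metrics(to_number(raw["revenue"]), to_number(raw["net_income"]))


def compare_to_benchmark(metrics: Metrics, benchmark_margin: float) -> dict:
    # Step 3: compare against an industry benchmark (placeholder value).
    return {"net_margin": metrics.net_margin, "gap": metrics.net_margin - benchmark_margin}


def identify_risks(comparison: dict) -> list[str]:
    # Step 4: derive risks from the comparison.
    if comparison["gap"] < 0:
        return ["Net margin is below the benchmark"]
    return []


def render_report(company: str, comparison: dict, risks: list[str]) -> str:
    # Step 5: fill a fixed template so every run has the same structure.
    lines = [f"Report: {company}", f"Net margin: {comparison['net_margin']:.1%}", "Risks:"]
    lines += [f"- {risk}" for risk in risks] or ["- none identified"]
    return "\n".join(lines)


raw = gather_data("ExampleCo")
metrics = extract_metrics(raw)
comparison = compare_to_benchmark(metrics, benchmark_margin=0.10)
risks = identify_risks(comparison)
report = render_report(raw["company"], comparison, risks)
print(report)
```

Each function can be tested or replaced on its own, and the intermediate values (`raw`, `metrics`, `comparison`, `risks`) are available for inspection after every run.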

Every run produces the same structure. Every run is auditable. Every run can be improved by optimizing individual steps without touching the rest.

This is system engineering applied to AI. It's less exciting than magic one-shot demos. It's also the only approach that delivers consistent, compliant, and scalable automation at any meaningful volume.

The Bottom Line

AI gives us powerful shortcuts. But shortcuts aren't always solutions.

The teams building reliable AI systems aren't chasing the next model release or writing longer prompts. They're doing the disciplined work of breaking complex problems into structured steps, testing each step independently, and building systems that produce consistent results every time.

Stop looking for shortcuts. Start building systems.