
Workflows, AI Augments, and AI Agents — What Actually Makes a Quality Agent

Most "AI agents" don't fail because the model is weak. They fail because the workflow underneath them is sloppy.

I've built over 100 AI agents since joining an AI startup last year. The pattern is consistent. The teams that succeed with AI aren't the ones using the most advanced models. They're the ones who put in the unglamorous work of designing clean, structured workflows before they ever plug in a language model.

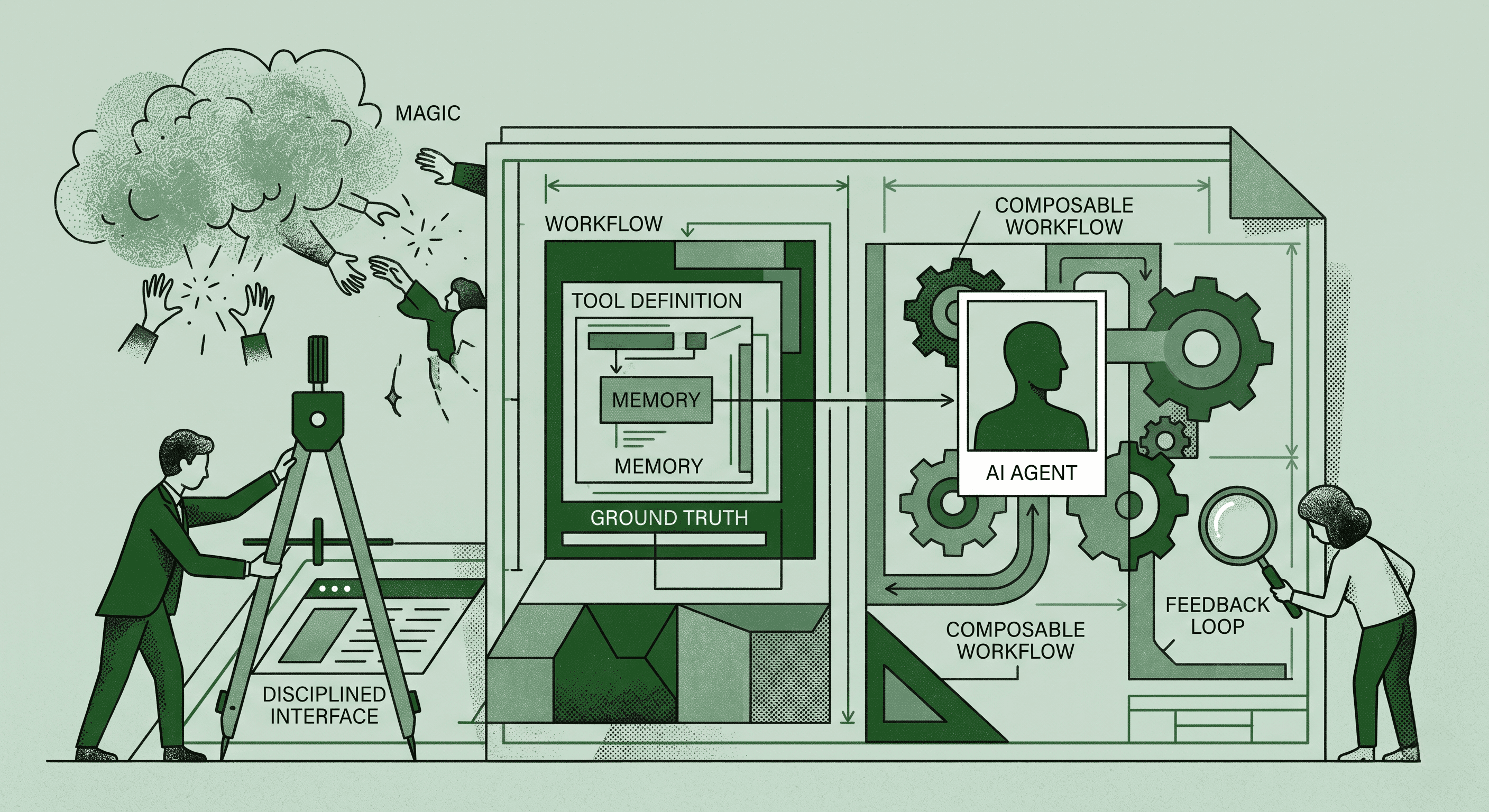

The AI industry talks about agents like they're magic. They're not. They're composable workflows with disciplined interfaces. Understanding the spectrum of what's available is the first step to building something that actually works.

The Three Categories of AI-Era Automation

Automation in the AI era breaks down into three categories. Each one has a place, and knowing which to use is half the battle.

Automated Workflows are the most structured option. You hardcode the entire path. The system follows a defined sequence every time. This includes prompt chaining, routing, parallelization, and orchestrator-worker patterns. A model may generate content at individual steps, but it never decides which step comes next. The control flow is deterministic, predictable, and reliable.
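To make this concrete, here is a minimal Python sketch of an automated workflow, assuming a hypothetical `call_llm` function in place of a real model client. A human-written rule picks the route, and the steps always run in the same order; the model only fills in text at each step.

```python
# Minimal sketch of an automated workflow: the path is fixed in code.
# `call_llm` is a hypothetical stand-in for whatever model client you use.

def call_llm(prompt: str) -> str:
    # Stub: a real implementation would call a model API here.
    return f"<llm output for: {prompt}>"

def route(ticket: str) -> str:
    # Routing rule written by a human; the model does not pick the branch.
    return "billing" if "invoice" in ticket.lower() else "general"

def handle_ticket(ticket: str) -> dict:
    # Route -> summarize -> draft reply, in the same order every time.
    category = route(ticket)
    summary = call_llm(f"Summarize this {category} ticket: {ticket}")
    reply = call_llm(f"Draft a reply based on this summary: {summary}")
    return {"category": category, "summary": summary, "reply": reply}

result = handle_ticket("My invoice is wrong")
print(result["category"])  # billing
```

Swapping `call_llm` for a real client changes the wording of the summary and reply, but never the order of the steps.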

AI Augmented Workflows sit in the middle. You hardcode the majority of the path, but AI steps into specific sections to add context and content dynamically. A human designs the process. AI handles the parts that benefit from flexibility. Think of it as a structured pipeline with smart inserts.
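An augmented workflow looks almost the same, except that one section is handed to a model. In this sketch (all names hypothetical), the greeting, plan details, and sign-off are fixed, while `generate_intro` supplies the one flexible paragraph. A simple check falls back to fixed text when the model output is empty or runs long.

```python
from typing import Callable

def build_welcome_email(name: str, plan: str,
                        generate_intro: Callable[[str, str], str]) -> str:
    # Hardcoded: greeting, plan details, and sign-off never change shape.
    greeting = f"Hi {name},"
    # AI insert: the one section that benefits from flexible wording.
    intro = generate_intro(name, plan).strip()
    # Guardrail: fall back to a fixed sentence if the model output is unusable.
    if not intro or len(intro) > 300:
        intro = f"Welcome to the {plan} plan."
    details = f"Your {plan} plan is now active."
    return "\n\n".join([greeting, intro, details, "- The Team"])

def fake_intro(name: str, plan: str) -> str:
    # Hypothetical stub standing in for a model call.
    return f"Great to have you on board, {name}!"

print(build_welcome_email("Ada", "Pro", fake_intro))
```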

AI Agents are the most autonomous option. The model decides which step to take next and which tools to call, based on the input, its memory, the available tools, and the instructions you've provided. This is powerful, but it's also where most implementations go wrong.
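The difference with an agent is that the model, not your code, chooses what happens next. This minimal loop assumes a model that returns either a tool call or a final answer; `scripted_model` is a hypothetical stub standing in for a real LLM. The `max_steps` cap is a design choice that guarantees the loop ends even if the model never decides to stop.

```python
from typing import Callable

Action = dict  # {"tool": name, "args": {...}} or {"final": answer}

def run_agent(task: str,
              model: Callable[[str, list], Action],
              tools: dict[str, Callable[..., str]],
              max_steps: int = 5) -> str:
    memory: list = []  # (action, observation) pairs from earlier steps
    for _ in range(max_steps):
        action = model(task, memory)       # the model picks the next step
        if "final" in action:
            return action["final"]
        observation = tools[action["tool"]](**action["args"])
        memory.append((action, observation))
    return "stopped: step limit reached"   # hard cap so the loop always ends

def scripted_model(task: str, memory: list) -> Action:
    # Stub policy: call the calculator once, then answer with its result.
    if not memory:
        return {"tool": "add", "args": {"a": 2, "b": 3}}
    return {"final": f"The answer is {memory[-1][1]}"}

tools = {"add": lambda a, b: str(a + b)}
print(run_agent("What is 2 + 3?", scripted_model, tools))  # The answer is 5
```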

The Pattern Across Strong Implementations

The pattern across successful AI agent builds is boring in the best way.

Start with the augmented LLM. Give it retrieval capabilities, tools, and memory. Get that working reliably before you add complexity.
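A minimal sketch of that starting point, assuming a hypothetical `call_llm` callable: the wrapper retrieves relevant documents, lists the available tools in the prompt, and keeps a short conversation memory. The word-overlap retriever is a deliberately crude placeholder; a real system would more likely use embeddings or a search index.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class AugmentedLLM:
    call_llm: Callable[[str], str]         # hypothetical model call
    documents: list[str]
    tools: dict[str, str]                  # tool name -> description
    memory: list[str] = field(default_factory=list)

    def retrieve(self, query: str, k: int = 2) -> list[str]:
        # Rank documents by how many query words they share (crude placeholder).
        words = set(query.lower().split())
        ranked = sorted(self.documents,
                        key=lambda d: len(words & set(d.lower().split())),
                        reverse=True)
        return ranked[:k]

    def ask(self, query: str) -> str:
        context = "\n".join(self.retrieve(query))
        tool_list = "\n".join(f"- {n}: {d}" for n, d in self.tools.items())
        history = "\n".join(self.memory[-4:])  # last two exchanges only
        prompt = (f"History:\n{history}\n\nContext:\n{context}\n\n"
                  f"Tools:\n{tool_list}\n\nQuestion: {query}")
        answer = self.call_llm(prompt)
        self.memory += [f"user: {query}", f"assistant: {answer}"]
        return answer

llm = AugmentedLLM(call_llm=lambda prompt: "Refunds take about 5 days.",
                   documents=["Refunds take 5 days.", "Shipping is free over $50."],
                   tools={"lookup_order": "Fetch an order by ID."})
llm.ask("How long do refunds take?")
print(llm.retrieve("How long do refunds take?", k=1))  # ['Refunds take 5 days.']
```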

Then layer in additional capability only when it measurably improves outcomes. Not because it seems cool. Not because a demo looked impressive. Only when you can prove it makes the output better.
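One way to enforce "only when it measurably improves outcomes" is an eval gate: score the current pipeline and the proposed change on the same labeled cases, and adopt the change only if it wins by a set margin. The pipelines below are stubs, and the 2-percentage-point `min_gain` threshold is an arbitrary assumption.

```python
from typing import Callable

Pipeline = Callable[[str], str]

def accuracy(pipeline: Pipeline, cases: list[tuple[str, str]]) -> float:
    # Fraction of cases where the output exactly matches the label.
    correct = sum(1 for inp, expected in cases if pipeline(inp) == expected)
    return correct / len(cases)

def should_adopt(baseline: Pipeline, candidate: Pipeline,
                 cases: list[tuple[str, str]], min_gain: float = 0.02) -> bool:
    # Adopt only on a measured improvement, not because a demo looked good.
    return accuracy(candidate, cases) - accuracy(baseline, cases) >= min_gain

cases = [("2+2", "4"), ("3+3", "6"), ("10-4", "6"), ("5*5", "25")]
baseline = lambda q: "4"                              # weak stub: always "4"
answers = {"2+2": "4", "3+3": "6", "10-4": "6"}
candidate = lambda q: answers.get(q, "?")             # stub "upgrade"
print(should_adopt(baseline, candidate, cases))  # True
print(should_adopt(baseline, baseline, cases))   # False
```

With only four cases, as here, almost any difference is noise; a real test set needs enough cases for the measured gain to mean something.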

Design for transparency at every step. Explicit planning steps let you see what the agent is thinking and catch errors before they compound. Black-box agents that "just figure it out" are the ones that fail unpredictably in production.
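A sketch of an explicit planning step, assuming the model can be asked to return its plan as structured data before doing anything: the plan is printed for review and checked against an allowlist of tools, so a bad step is rejected before it runs. `make_plan` is a hypothetical stub for that model call.

```python
ALLOWED_TOOLS = {"search", "summarize", "email"}

def make_plan(task: str) -> list[dict]:
    # Stub: a real system would ask the model to emit this plan as JSON.
    return [{"tool": "search", "input": task},
            {"tool": "summarize", "input": "search results"}]

def validate_plan(plan: list[dict]) -> list[str]:
    # Catch errors before anything runs, instead of after they compound.
    return [f"step {i}: unknown tool '{s['tool']}'"
            for i, s in enumerate(plan, 1) if s["tool"] not in ALLOWED_TOOLS]

def run(task: str) -> list[str]:
    plan = make_plan(task)
    for i, step in enumerate(plan, 1):
        print(f"PLAN {i}: {step['tool']}({step['input']!r})")  # visible to reviewers
    errors = validate_plan(plan)
    if errors:
        raise ValueError("; ".join(errors))
    return [f"ran {s['tool']}" for s in plan]  # execution stub

print(run("quarterly revenue"))
```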

Build a Great Agent-Computer Interface

This is where most teams underinvest. The interface between your agent and the tools it uses needs to leave nothing to guesswork.

That means precise tool definitions. Concrete examples for every tool call. Edge cases documented. Constraints spelled out explicitly. If your agent doesn't know exactly what each tool does and when to use it, it will make bad decisions at scale.

Think of it like onboarding a new employee. If you hand them vague instructions and expect them to figure it out, they'll make mistakes. If you give them clear documentation, examples, and guardrails, they'll perform reliably from day one.
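Here is what that can look like for a single tool. The definition below is hypothetical and loosely follows the name, description, and JSON Schema shape that many tool-calling APIs use; the exact field names (`input_schema` here) vary by provider, and the extra `examples` and `edge_cases` fields may need to be folded into the description text if your provider doesn't accept them. The validator enforces the documented constraints in code rather than trusting the model to follow them.

```python
# Hypothetical tool definition: precise description, a concrete example,
# documented edge cases, and explicit constraints.
REFUND_TOOL = {
    "name": "issue_refund",
    "description": (
        "Refund part or all of a single paid order. Use only after the customer "
        "has confirmed the order ID. Do not use for subscription cancellations."
    ),
    "input_schema": {
        "type": "object",
        "properties": {
            "order_id": {"type": "string",
                         "description": "Format ORD-<digits>, e.g. ORD-1042"},
            "amount_cents": {"type": "integer", "minimum": 1, "maximum": 50000},
        },
        "required": ["order_id", "amount_cents"],
    },
    "examples": [{"order_id": "ORD-1042", "amount_cents": 1999}],
    "edge_cases": "If the order is already refunded, return an error; never refund twice.",
}

def validate_refund_args(args: dict) -> list[str]:
    # Enforce the documented constraints before the tool actually runs.
    errors = []
    schema = REFUND_TOOL["input_schema"]
    for key in schema["required"]:
        if key not in args:
            errors.append(f"missing {key}")
    oid = args.get("order_id", "")
    if "order_id" in args and not (oid.startswith("ORD-") and oid[4:].isdigit()):
        errors.append("order_id must look like ORD-<digits>")
    amt = args.get("amount_cents")
    limits = schema["properties"]["amount_cents"]
    if amt is not None and not (isinstance(amt, int)
                                and limits["minimum"] <= amt <= limits["maximum"]):
        errors.append("amount_cents out of range")
    return errors

print(validate_refund_args({"order_id": "1042", "amount_cents": 0}))
```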

Test Like It Matters

Finally, test in sandboxed environments, and build feedback loops that check outputs against "ground truth" (known-correct results) so the system can recover from errors.

This isn't optional. Every agent will make mistakes. The difference between a good agent and a bad one is whether those mistakes get caught, corrected, and prevented from recurring.
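A minimal sketch of a ground-truth feedback loop, assuming the agent runs against sandboxed tools rather than production systems. Each test case has a known correct answer; a miss is reported back to the agent (without revealing the answer) for one retry, and anything still wrong is recorded as a regression case to re-run after every change. `flaky_agent` is a hypothetical stub.

```python
from typing import Callable, Optional

def evaluate(agent: Callable[[str, Optional[str]], str],
             cases: list[tuple[str, str]], max_retries: int = 1) -> list[dict]:
    failures = []
    for task, expected in cases:
        feedback = None
        for attempt in range(max_retries + 1):
            output = agent(task, feedback)
            if output == expected:
                break
            # Report the failed check, but not the expected answer itself.
            feedback = f"Attempt {attempt + 1} failed the ground-truth check; got {output!r}."
        else:
            # No attempt matched: keep it as a regression case.
            failures.append({"task": task, "expected": expected, "got": output})
    return failures

def flaky_agent(task: str, feedback: Optional[str]) -> str:
    # Stub: gets the first question right only after feedback.
    if task == "capital of France" and feedback is None:
        return "Lyon"
    return {"capital of France": "Paris", "2+2": "4"}.get(task, "unknown")

cases = [("capital of France", "Paris"), ("2+2", "4"), ("capital of Peru", "Lima")]
print(evaluate(flaky_agent, cases))
# [{'task': 'capital of Peru', 'expected': 'Lima', 'got': 'unknown'}]
```

Passing back only that the check failed keeps the retry honest; if the agent could see the reference answer, the loop would measure nothing.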

Quality agents aren't magic. They're composable workflows plus disciplined interfaces. Start simple, add complexity only when earned, and never skip the fundamentals.